source. The Kafka project introduced a new consumer API between versions 0.8 and 0.10, so there are 2 separate corresponding Spark Streaming packages available. The API provides one-to-one mapping between Kafka's partition and the DStream generated RDDs partition along with access to metadata and offset.

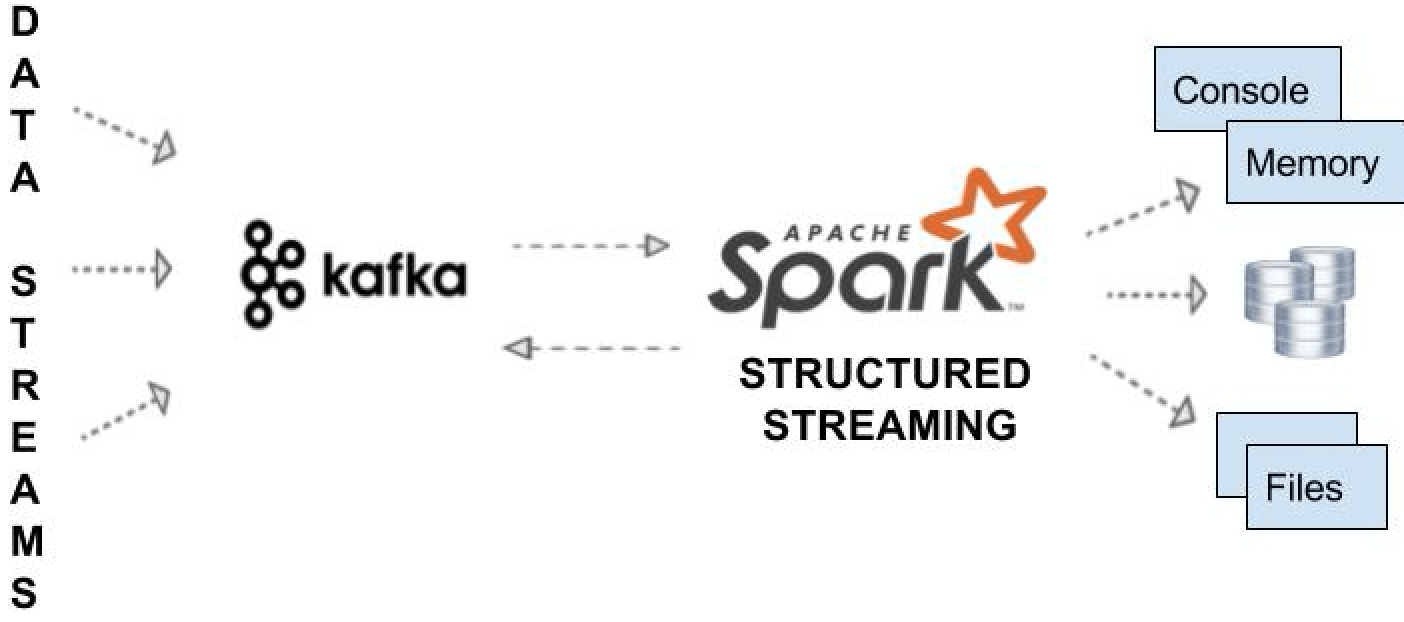

The following diagram shows end-to-end integration with Kafka, consuming messages from it, doing simple to complex windowing ETL, and pushing the desired output to various sinks such as memory, console, file, databases, and back to Kafka itself.

Following set of properties will need to be added to Spark Streaming API to integrate Kafka with Spark as a Source

bootstrap.servers: This describes the host and port of Kafka server(s) separated by a comma.

key.deserializer: This is the name of the class to deserialize the key of the messages from Kafka.

value.deserializer: This refers to the class that deserializes the value of the message.

group.id: This uniquely identifies the group of consumer.

auto.offset.reset: This is used messages are consumed from a topic in Kafka, but does not have initial offset in Kafka or if the current offset does not exist anymore on the server then one of the following options helps.